Let's cut through the noise.

Google's stance on AI-generated content has evolved significantly, and the confusion is understandable. Between algorithm updates, new spam policies, and conflicting advice across the web, marketers are left wondering: Can I actually use AI to create content without tanking my rankings?

The short answer is yes—but with important caveats.

Google doesn't penalize content simply because AI helped create it. What matters is whether that content serves your audience or exists purely to manipulate search rankings [1]. The distinction sounds simple, but the practical application trips up countless marketing teams.

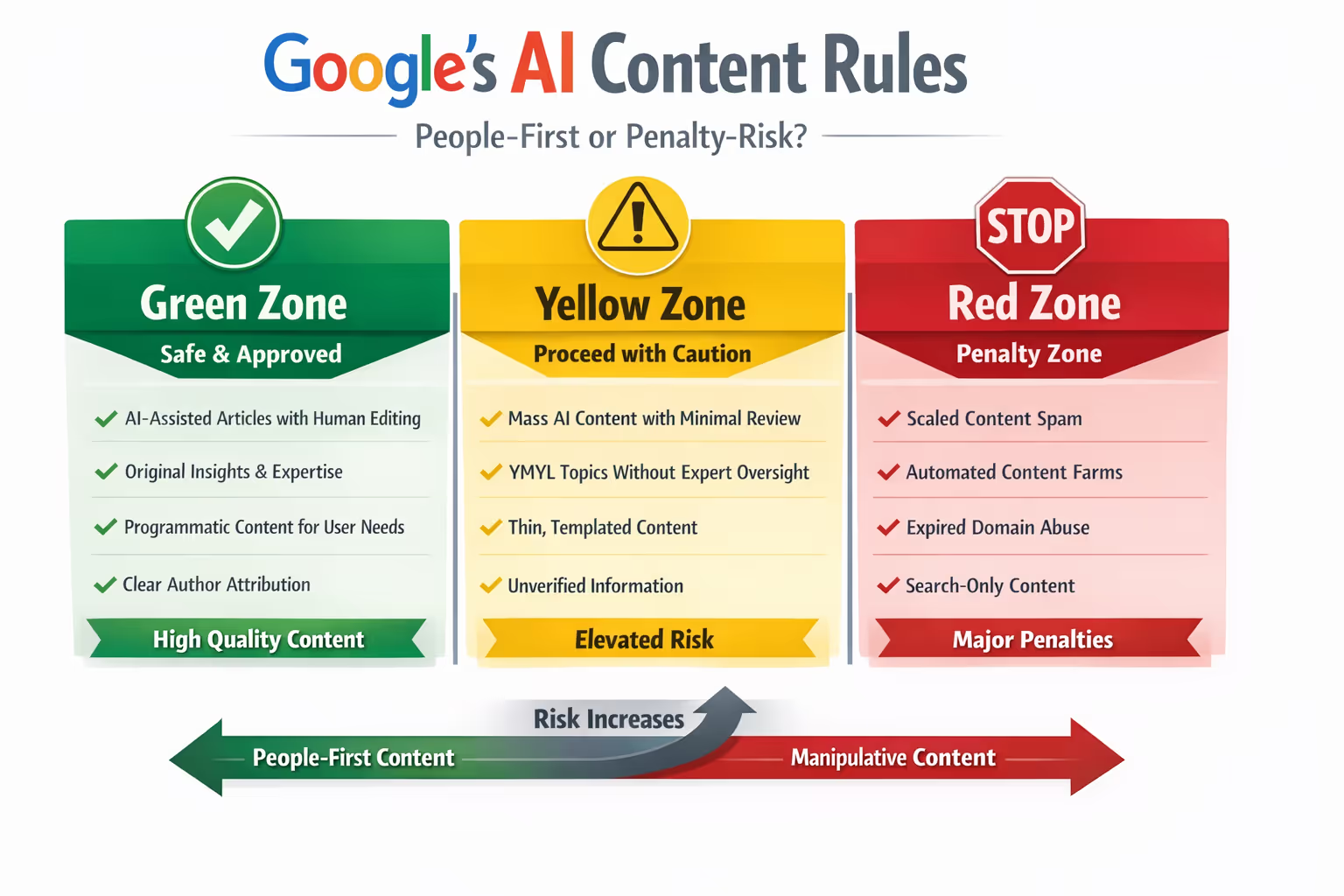

This guide breaks down Google's official AI-generated content rules into actionable publishing behaviors. We'll translate policy language into a clear green/yellow/red framework you can apply today. No guesswork. No hedging. Just the operational clarity you need to publish confidently.

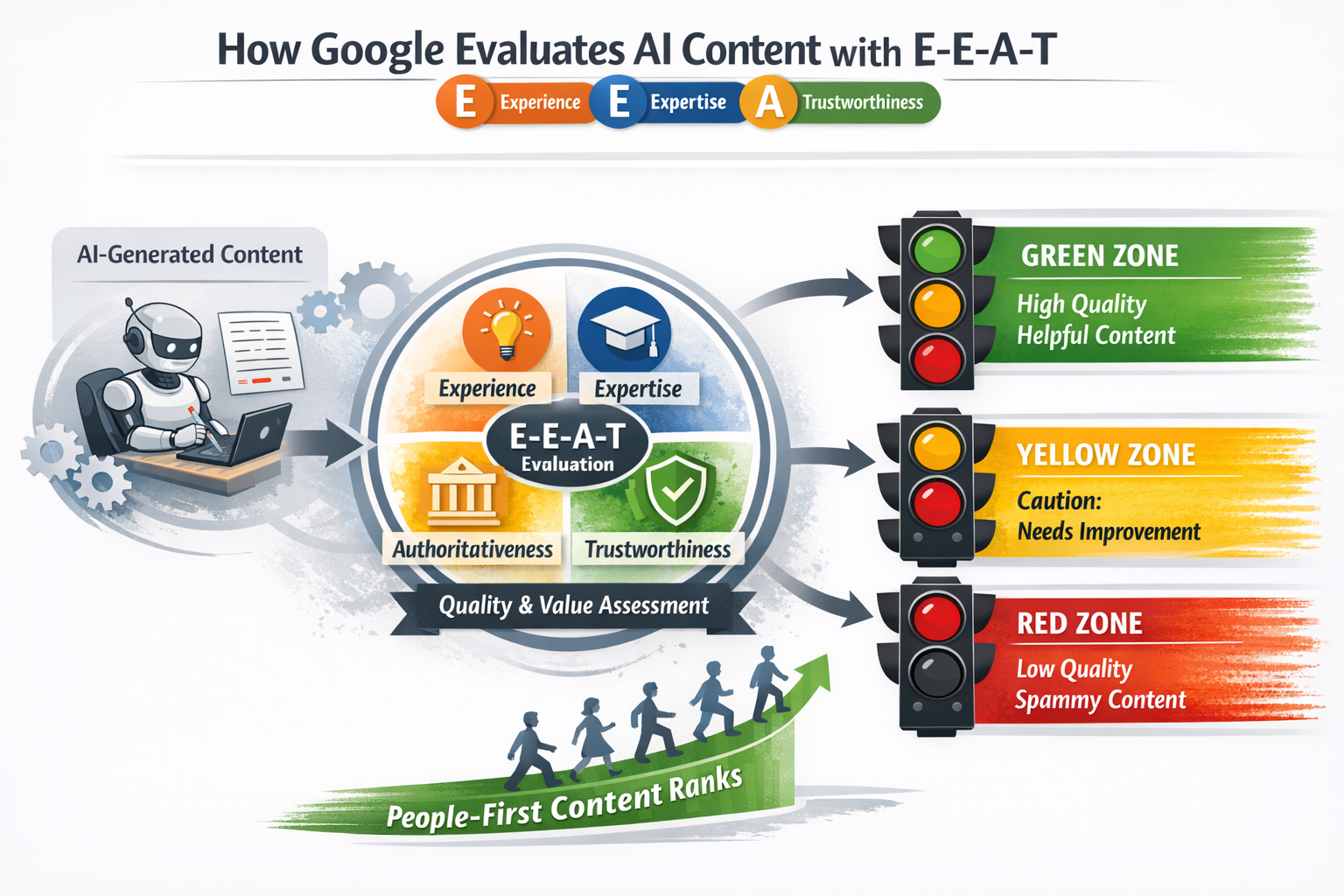

How Google Actually Evaluates AI Content

Google's quality raters and algorithms don't have an "AI detector" that automatically flags machine-generated text. Instead, they evaluate content using the same E-E-A-T criteria applied to all content: Experience, Expertise, Authoritativeness, and Trustworthiness [2].

Here's what that means practically.

A well-researched, genuinely helpful article written with AI assistance can rank just as well as one written entirely by humans, provided it meets the same quality standards. Conversely, low-quality human-written content fails just as spectacularly as low-quality AI content.

The March 2024 core update reinforced this position while adding sharper teeth. Alongside it, Google introduced spam policies targeting what it calls "scaled content abuse": the practice of producing large volumes of low-value content, regardless of whether humans or machines created it [3].

The penalty isn't for using AI. It's for using any method to flood the web with unhelpful content.

The Green/Yellow/Red Framework for AI Publishing

Understanding policy is one thing. Knowing what to do Monday morning is another.

This framework categorizes common AI content practices into three zones based on Google's guidance and observed enforcement patterns.

Green Zone: Approved Publishing Behaviors

These practices align with Google's stated policies and carry minimal risk:

Using AI as a research and drafting assistant while adding original insights, analysis, or expertise

AI-assisted content with substantive human editing that improves accuracy, adds nuance, and ensures factual correctness

Programmatic content serving genuine user needs—think product descriptions with unique specifications or localized information pages with area-specific data

AI tools for ideation, outlining, and structure when human judgment shapes the final output

Clearly bylined content with author expertise where AI speeds up production but doesn't replace subject matter knowledge

The common thread? Human judgment and expertise remain central to the content's value.

Yellow Zone: Proceed with Caution

These practices exist in a gray area. They're not automatically penalized, but they carry elevated risk:

High-volume AI content without consistent editorial review—even if individual pieces seem fine, patterns of thin content attract scrutiny

AI-generated content in YMYL (Your Money or Your Life) topics like health, finance, or legal matters without expert verification

Templated AI content with minimal customization across multiple pages or sites

Content that technically answers queries but lacks depth, original perspective, or practical value

AI content published without fact-checking or source verification

Yellow zone activities aren't necessarily spam. But they're one algorithm update away from causing serious problems.

Red Zone: Content That Triggers Penalties

These practices either violate Google's spam policies directly or closely match the patterns those policies target:

Scaled content abuse: producing massive volumes of content primarily to manipulate search rankings rather than help users [3]

Site reputation abuse: publishing third-party content designed to exploit a domain's ranking signals

Expired domain abuse: purchasing old domains to host low-quality content hoping to inherit authority

AI-generated content with no editorial oversight whatsoever—purely automated publishing pipelines

Content that exists solely for search engines with no genuine value for human readers

Intentionally obscuring AI use in contexts where disclosure affects trust (sponsored content, reviews, news)

When Google identifies these patterns, the response is typically a manual action or significant ranking demotion.
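To make the three zones easier to apply during editorial review, here is a minimal Python sketch of a triage function. It is an illustration of this article's framework, not anything Google publishes or runs: the `Draft` fields, the `classify` function, and the order of the checks are all assumptions, and every flag is meant to be set by a human reviewer.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


@dataclass
class Draft:
    # Hypothetical editorial flags, filled in by a human reviewer.
    human_edited: bool                 # substantive editing, not just proofreading
    fact_checked: bool                 # statistics and claims verified against sources
    adds_original_insight: bool        # analysis or experience beyond a summary
    is_ymyl: bool                      # health, finance, legal, and similar topics
    expert_reviewed: bool              # checked by a qualified subject matter expert
    made_primarily_for_rankings: bool  # exists for search engines, not readers


def classify(draft: Draft) -> Zone:
    """Map a draft to the green/yellow/red zones described in this guide."""
    # Red: content made for rankings, or published with no oversight at all.
    if draft.made_primarily_for_rankings:
        return Zone.RED
    if not draft.human_edited and not draft.fact_checked:
        return Zone.RED
    # Yellow: some review happened, but a caution-zone condition still applies.
    if not draft.human_edited or not draft.fact_checked:
        return Zone.YELLOW
    if draft.is_ymyl and not draft.expert_reviewed:
        return Zone.YELLOW
    if not draft.adds_original_insight:
        return Zone.YELLOW
    return Zone.GREEN


if __name__ == "__main__":
    finance_post = Draft(
        human_edited=True,
        fact_checked=True,
        adds_original_insight=True,
        is_ymyl=True,
        expert_reviewed=False,
        made_primarily_for_rankings=False,
    )
    print(classify(finance_post).value)
```

A function like this only records judgments an editor has already made. It cannot decide on its own whether a piece is helpful, and a green result is not a guarantee about rankings.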

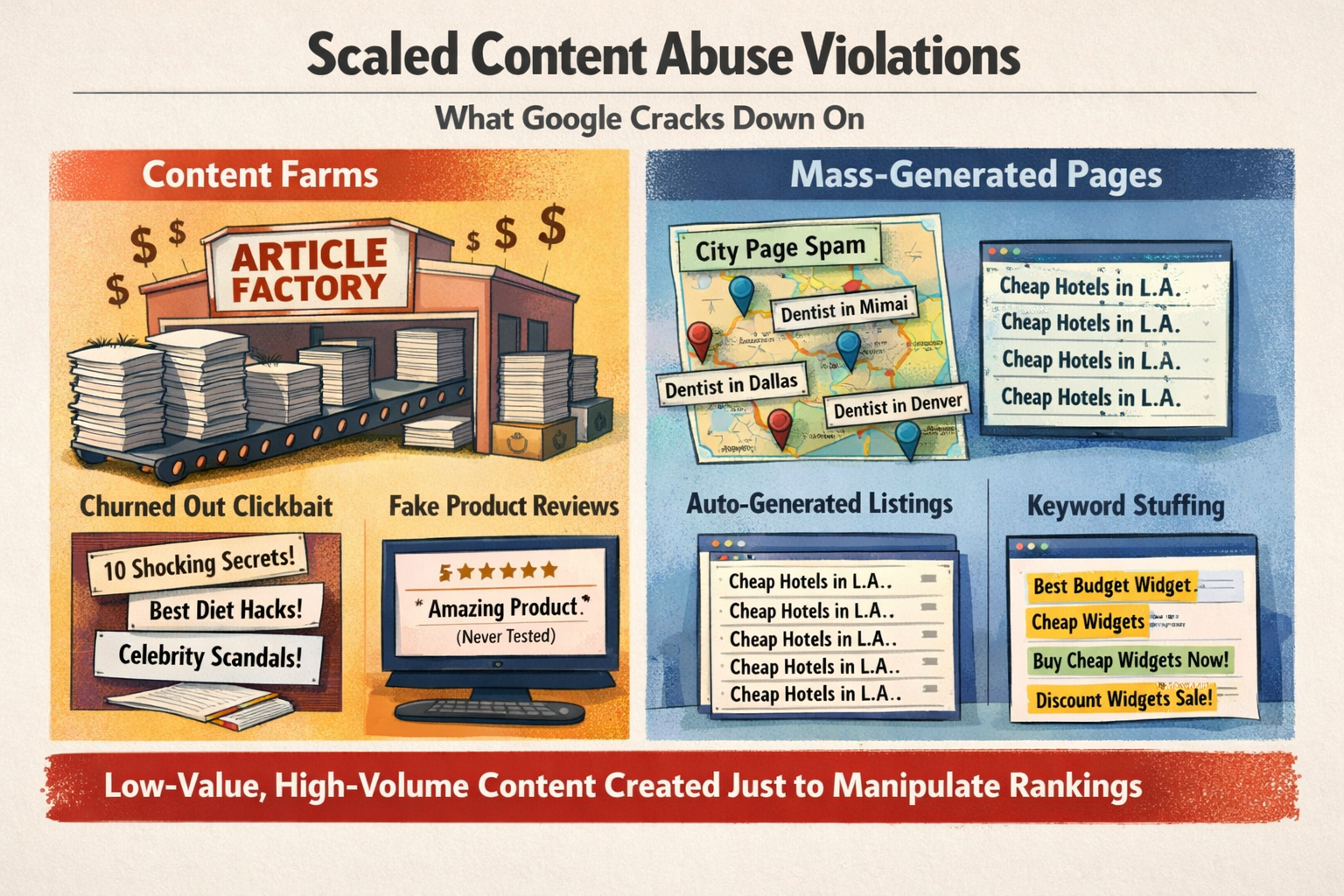

What "Scaled Content Abuse" Actually Looks Like

Google's spam policies specifically call out scaled content abuse, but the documentation doesn't provide many concrete examples [3]. Let's fix that.

Scaled content abuse includes:

Publishing hundreds of location pages with only the city name swapped out

Generating product "reviews" for items never tested or used

Creating content farms that churn out articles across hundreds of topics with no subject matter expertise

Producing "comparison" content that summarizes other articles without adding analysis

Auto-generating pages targeting every conceivable long-tail keyword variation

It does NOT include:

Using AI to help write genuinely helpful content at a sustainable pace

Creating programmatic pages with unique, valuable data (real estate listings, product specifications with accurate details)

Publishing consistently on topics where you have legitimate expertise

Using AI to improve writing efficiency while maintaining quality standards

The distinction centers on intent and value. Are you creating content to help people, using AI as a tool? Or are you using AI to produce content that exists purely for rankings?
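One pattern from the list above, location pages with only the city name swapped out, is easy to check for in your own content before publishing. The sketch below uses Python's standard `difflib` module to flag page pairs whose text is nearly identical. The function names, the sample pages, and the 0.8 threshold are illustrative assumptions. This is a rough internal audit, not a reproduction of how Google detects anything.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two page bodies, compared word by word."""
    return SequenceMatcher(None, a.split(), b.split()).ratio()


def near_duplicates(pages: dict[str, str], threshold: float = 0.8) -> list[tuple[str, str, float]]:
    """List every pair of pages whose similarity meets the (assumed) threshold."""
    flagged = []
    for (url_a, text_a), (url_b, text_b) in combinations(pages.items(), 2):
        score = similarity(text_a, text_b)
        if score >= threshold:
            flagged.append((url_a, url_b, round(score, 2)))
    return flagged


if __name__ == "__main__":
    # Hypothetical sample pages: two city-swapped templates and one distinct guide.
    pages = {
        "/plumber-austin": "Need a plumber in Austin? Our licensed team serves Austin homes with same-day repairs.",
        "/plumber-dallas": "Need a plumber in Dallas? Our licensed team serves Dallas homes with same-day repairs.",
        "/water-heater-guide": "Tankless water heaters cost more upfront but can cut energy use for small households.",
    }
    for url_a, url_b, score in near_duplicates(pages):
        print(f"{url_a} and {url_b} look templated (similarity {score})")
```

High similarity alone is not proof of abuse. Programmatic pages with unique, valuable data can share a layout and still be useful, so treat a flagged pair as a prompt for human review rather than a verdict.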

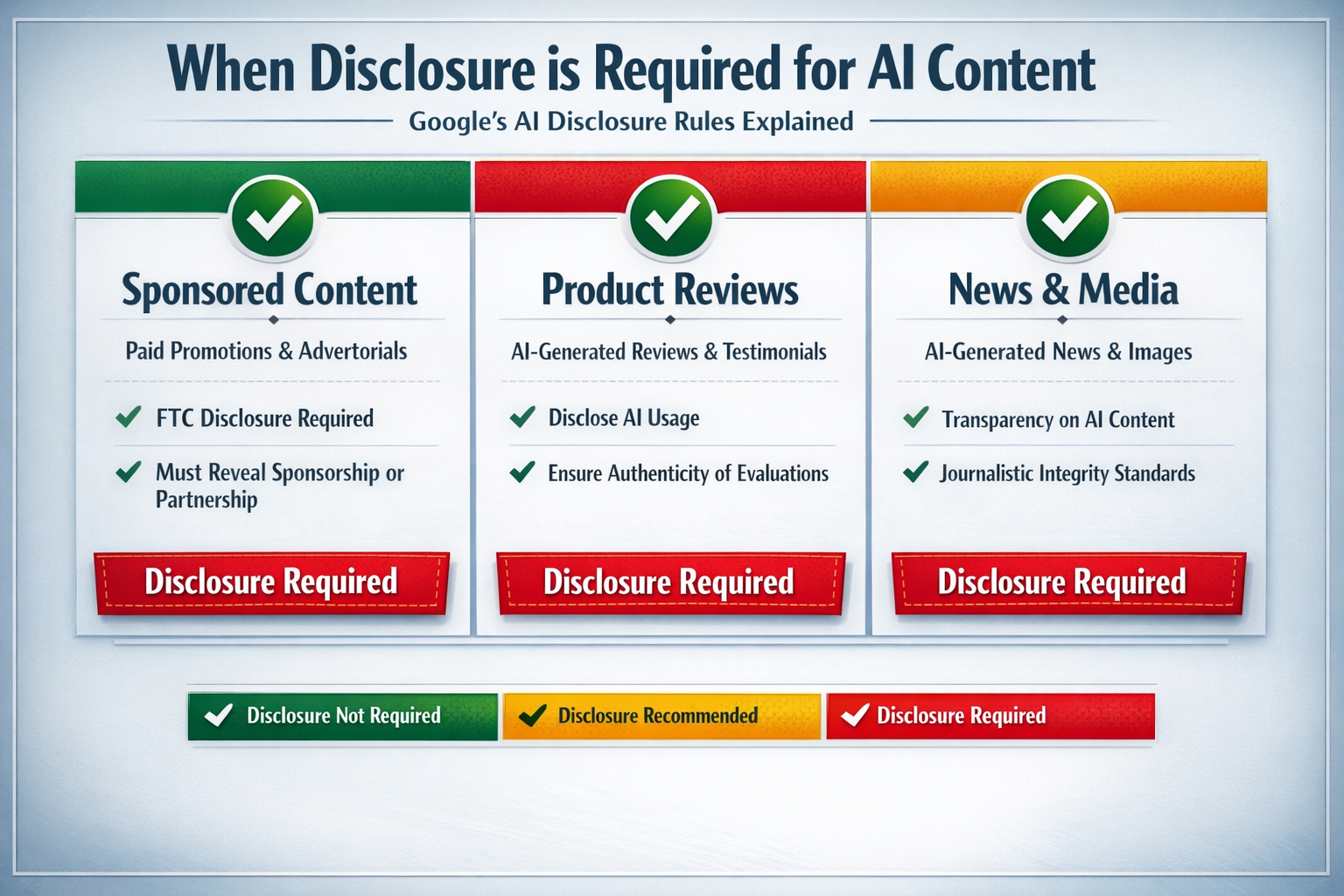

Google's Disclosure Requirements: What You Must Reveal

Here's where many publishers get confused.

Google does not require you to disclose AI involvement in standard content. You won't find a policy demanding "This article was written with ChatGPT" disclaimers [1].

However, disclosure becomes necessary—and sometimes legally required—in specific contexts:

Disclosure required:

Sponsored or paid content: FTC guidelines require disclosure regardless of who or what created the content [4]

AI-generated images or media that could mislead viewers about authenticity

News content where journalistic standards apply

Product reviews where authenticity expectations exist

Any context where AI involvement affects trust and a reasonable person would want to know

Disclosure recommended:

Content in industries where readers care about the author's direct experience

YMYL topics where human expertise provides reassurance

Situations where your audience has expressed preference for transparency

Disclosure optional:

Standard informational blog posts

Marketing content where the value speaks for itself

Internal documentation or operational content

The guiding principle: if a reader would feel deceived upon learning AI was involved, you should disclose. If AI simply helped you work more efficiently without affecting the content's trustworthiness, disclosure is your choice.

The People-First Content Self-Assessment

Google published a set of self-assessment questions to help creators evaluate their content [2]. These questions reveal more about quality expectations than any policy document.

Before publishing AI-assisted content, ask yourself:

Experience questions:

Does this content demonstrate first-hand experience with the topic?

Would someone reading this feel the author has actually done, used, or experienced what they're describing?

Expertise questions:

Was this content reviewed by someone with genuine subject matter knowledge?

Does it reflect the depth of understanding an expert would bring?

Value questions:

After reading this, will someone feel they've learned enough to achieve their goal?

Does this content provide substantial value compared to other pages in search results?

Would you feel comfortable showing this to a client, boss, or friend?

Trust questions:

Are claims supported by sources, evidence, or expertise?

Would someone trust this content enough to act on it?

Does the content feel authoritative, or does it read like someone summarizing other sources?

If you hesitate on any of these questions, your content likely falls in the yellow zone at best.

How to Move from Yellow Zone to Green Zone

Most marketers aren't creating spam. They're creating content that's almost good enough—but lacks the polish that separates helpful content from filler.

Moving from yellow to green typically requires:

Adding genuine expertise. AI can research and structure information. It struggles to add the nuance, context, and practical insights that come from real experience. Every piece needs that human layer.

Rigorous fact-checking. AI models can state incorrect information with complete confidence. Every statistic, claim, and recommendation needs verification before publication.

Original analysis or perspective. If your content only summarizes what's already ranking, why would Google show it? Add something new—a framework, a contrarian take, practical applications competitors missed.

Quality editing. Not just proofreading. Substantive editing that improves clarity, removes fluff, tightens arguments, and ensures the content actually delivers on its headline promise.

Proper sourcing. Cite authoritative sources. Link to original research. Demonstrate that claims rest on more than AI's pattern-matching abilities.

This is precisely where most AI content fails. The generation happens; the refinement doesn't.

Building a Sustainable AI Content Process

The goal isn't to avoid AI—it's to use AI in ways that consistently produce genuinely helpful content.

A sustainable process looks like this:

Strategic topic selection based on keyword research and audience needs, not just search volume

AI-assisted research and drafting to accelerate the initial content creation

Expert review and enhancement where human judgment adds depth, corrects errors, and ensures accuracy

Editorial quality control that enforces consistency, readability, and brand voice

Performance monitoring to identify what's working and what needs improvement

Skip any step, and you're gambling with your search visibility.
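One way to make "skip no step" enforceable is a simple gate in your publishing workflow. The Python sketch below is a minimal illustration under stated assumptions: the step names are hypothetical labels for the first four stages listed above, and performance monitoring is left out of the gate because it happens after a piece goes live.

```python
# Hypothetical labels for the pre-publish stages of the process described above.
PRE_PUBLISH_STEPS = (
    "topic_selection",
    "ai_assisted_draft",
    "expert_review",
    "editorial_qc",
)


def missing_steps(completed: set[str]) -> list[str]:
    """Return the pre-publish steps not yet completed, in process order."""
    return [step for step in PRE_PUBLISH_STEPS if step not in completed]


def can_publish(completed: set[str]) -> bool:
    """A piece may go live only when no pre-publish step is missing."""
    return not missing_steps(completed)


if __name__ == "__main__":
    done = {"topic_selection", "ai_assisted_draft"}
    if can_publish(done):
        print("Ready to publish")
    else:
        print("Blocked, still missing:", ", ".join(missing_steps(done)))
```

The gate is deliberately strict. A draft that has skipped expert review is blocked even if everything else is done, which mirrors the point that generation without refinement is where most AI content fails.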

The teams that thrive with AI content aren't the ones publishing the most. They're the ones who've built systems ensuring every piece meets quality thresholds before going live.

Start Publishing Confidently with AI-Assisted Content

Google's AI-generated content rules aren't complicated once you understand the core principle: create content for humans first, using whatever tools help you do that effectively.

Stay in the green zone by combining AI efficiency with human expertise. Watch for yellow zone drift when volume pressures mount. And never mistake quantity of content for quality of strategy.

If building that quality control process in-house feels overwhelming, you're not alone. That's exactly why systems that combine AI drafting with human editing and rigorous quality assurance exist—to handle the refinement work that transforms decent AI output into content that genuinely performs.

Ready to publish AI-assisted content that meets Google's standards? Try the Mighty Quill Blog Engine free and see how our AI + human editorial process keeps your content firmly in the green zone.

Frequently Asked Questions

Does Google penalize AI-generated content?

No. Google does not penalize content simply because AI helped create it. Google evaluates all content—human or AI-generated—based on quality, helpfulness, and E-E-A-T criteria. Low-quality content faces ranking penalties regardless of how it was produced. The focus is on whether content serves users, not on the production method [1].

What is scaled content abuse?

Scaled content abuse refers to generating large amounts of content primarily to manipulate search rankings rather than help users. This includes mass-producing low-quality articles, creating hundreds of pages with minimal variation, or using any method (AI or otherwise) to flood search results with unhelpful content. Google's spam policies explicitly target this behavior, and enforcement can come through automated systems or manual actions [3].

Do I need to disclose when content is AI-generated?

Google doesn't require disclosure for standard AI-assisted content. However, disclosure becomes necessary for sponsored content (per FTC guidelines), AI-generated media that could mislead viewers, and contexts where transparency affects reader trust. When in doubt, consider whether readers would feel deceived learning about AI involvement [4].

How do I know if my AI content meets Google's quality standards?

Use Google's people-first content self-assessment questions. Ask whether your content demonstrates genuine expertise, provides substantial value compared to competitors, and would satisfy someone who clicked through from search results. If the content only summarizes existing information without adding depth or original insight, it likely falls short of quality standards [2].

Can I publish AI content in YMYL (health, finance, legal) topics?

Yes, but with extra caution. YMYL topics face heightened scrutiny because inaccurate information can harm readers. AI-generated content in these areas should always be reviewed by qualified experts, fact-checked thoroughly, and clearly attributed to authoritative sources. Publishing unverified AI content in YMYL niches carries significant ranking and reputational risk.

About The Mighty Quill

The Mighty Quill is an AI-powered blog engine built by marketers who've spent years navigating search algorithm changes and content quality requirements. Our system combines AI drafting efficiency with human editorial oversight—the exact combination Google's guidelines favor. Every article passes through expert review, fact-checking, and quality assurance before reaching your site, ensuring your content stays confidently in the green zone.

Cited Works

[1] Google Search Central — "Google Search's guidance about AI-generated content." https://developers.google.com/search/blog/2023/02/google-search-and-ai-content

[2] Google Search Central — "Creating helpful, reliable, people-first content." https://developers.google.com/search/docs/fundamentals/creating-helpful-content

[3] Google Search Central — "Spam policies for Google web search." https://developers.google.com/search/docs/essentials/spam-policies

[4] Federal Trade Commission — "Disclosures 101 for Social Media Influencers." https://www.ftc.gov/business-guidance/resources/disclosures-101-social-media-influencers